SEO basics in React

SEO is a crucial aspect of a website's online presence. The technology landscape is evolving rapidly, and applications built with React.js can be optimised for search engines just like any other site. This article focuses on the technical aspects that improve a website's visibility in search engine results.

We will discuss strategies tailored to the React environment and give practical tips on optimising the code structure, URLs, indexing and other key elements that affect a website's search engine ranking. The analysis focuses on source code issues, so that search engine algorithms are supported as well as possible.

What is SEO?

Generally speaking, SEO (Search Engine Optimisation) is the process of optimising a website for search engines such as Google. The aim of SEO is to prepare a website so that it appears in the highest possible position in search results. SEO is primarily concerned with the technical preparation of a website so that it can be crawled by indexing robots, and the structuring of relevant content that is useful to potential customers.

Effective SEO leads to increased visibility in search results, attracts potential customers and improves conversion rates. Although it is a time-consuming process, it can bring significant benefits to your site!

How to improve SEO in React?

There are several ways to do this, and in this article we will list and briefly describe some of them. We will focus mainly on the technical preparation of the site, leaving aside issues such as on-page content and keywords.

To improve on-page SEO, it is important to meet certain criteria. Here are some of them:

- Using semantic HTML

- Accessibility

- Metatags

- Using HTTPS

- Friendly URL

- robots.txt file

- sitemap.xml file

Using semantic HTML

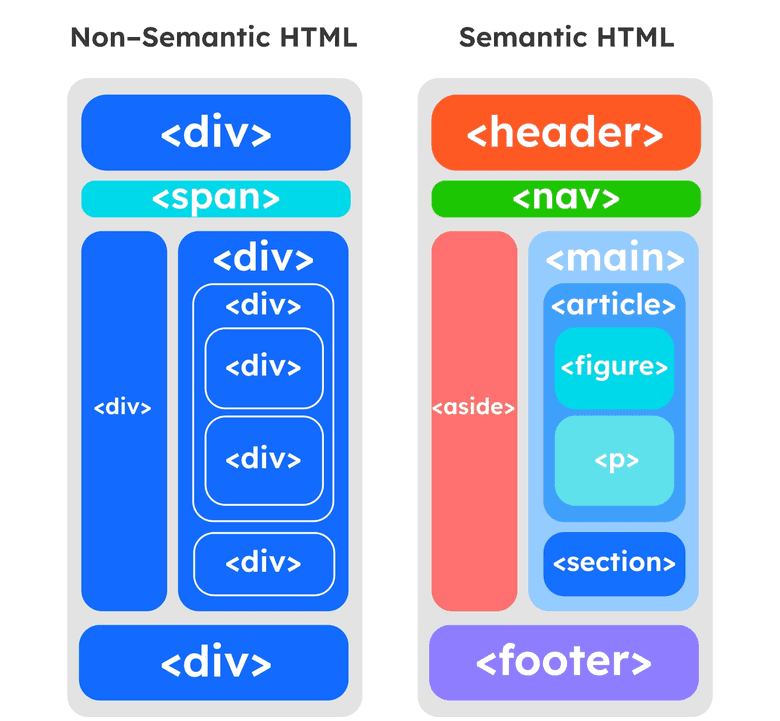

To help search engines understand the structure and content of your site, you should always use semantic HTML. Appropriate tags for headers <header />, main content <main />, footers <footer />, sections <section /> and articles <article />, together with the heading tags <h1 /> - <h6 />, let Google's robots scan the page properly and show them the hierarchy of importance of the content. For example, the <h1 /> tag should be used only once, for the title of the most important content on the page. The remaining heading tags should follow that hierarchy: less important content gets a lower-level heading, so an <h4 /> should sit beneath an <h2 /> or <h3 /> rather than above them, and the levels should descend in order.

A page built only from tags such as <div /> or <span /> gives the indexing robot far fewer clues, so it may not index the page correctly or understand its content.

The diagram above shows two cases. The first uses only <div /> and <span /> tags. In the second the HTML structure is semantic, so the indexing robot is better able to understand the content on the page.
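The heading hierarchy described above can be checked mechanically. The sketch below is only an illustration: the function name checkHeadingOrder and the rule "never go deeper by more than one level at a time" are assumptions made for this example, not something taken from the article's repository. It takes the heading levels of a page in document order and reports a missing or repeated <h1 /> and any skipped level.

```typescript
// Hypothetical helper (not from the article's repository).
// Input: heading levels in document order, e.g. [1, 2, 3, 2] for h1, h2, h3, h2.
type HeadingIssue = { index: number; message: string };

function checkHeadingOrder(levels: number[]): HeadingIssue[] {
  const issues: HeadingIssue[] = [];

  // The page should contain exactly one h1.
  const h1Count = levels.filter((level) => level === 1).length;
  if (h1Count !== 1) {
    issues.push({ index: -1, message: `expected exactly one h1, found ${h1Count}` });
  }

  // Assumed rule: going deeper by more than one level (e.g. h2 -> h4) skips a level.
  for (let i = 1; i < levels.length; i++) {
    if (levels[i] - levels[i - 1] > 1) {
      issues.push({ index: i, message: `h${levels[i - 1]} is followed by h${levels[i]}` });
    }
  }
  return issues;
}
```

In a browser, the input could be collected with document.querySelectorAll("h1, h2, h3, h4, h5, h6") and the digit in each element's tagName.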

Accessibility

Improving the accessibility of a site is another factor that helps SEO. One can even say that it is one of the foundations of good SEO.

To ensure good accessibility on your site, it is worth following some important guidelines:

- Use semantic HTML to ensure the readability and clarity of the page, so that content is clear and easy to understand;

- Provide alternative text for images, videos and other multimedia, for example with the alt attribute on images;

- Use colours that are easy to read and have adequate contrast:

  - The contrast ratio between normal text and background should not be less than 4.5:1;

  - The contrast ratio between large text and background should not be less than 3:1;

- Enable people with reduced mobility to access the content of the site using the keyboard - the site should be operable using the keyboard only. To this end, make sure interactive elements can be reached and operated with the Tab key (the tabindex attribute controls which elements receive focus), and use aria-* attributes in the HTML code to describe the role and state of elements to assistive technologies.
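The contrast thresholds in the list above can be verified in code. The following is a minimal sketch of the contrast ratio calculation as defined in WCAG 2.x (relative luminance of both colours, then (lighter + 0.05) / (darker + 0.05)). The function names and the restriction to six-digit hex colours are assumptions made for this example.

```typescript
// Parses "#rrggbb" into [r, g, b] channel values in the range 0..255.
function parseHex(hex: string): [number, number, number] {
  const match = /^#?([0-9a-f]{6})$/i.exec(hex);
  if (!match) throw new Error(`expected a 6-digit hex colour, got "${hex}"`);
  const value = parseInt(match[1], 16);
  return [(value >> 16) & 0xff, (value >> 8) & 0xff, value & 0xff];
}

// Relative luminance of an sRGB colour, following the WCAG 2.x definition.
function relativeLuminance(hex: string): number {
  const [r, g, b] = parseHex(hex).map((channel) => {
    const c = channel / 255;
    return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
  });
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

// Contrast ratio between two colours; the order of the arguments does not matter.
function contrastRatio(foreground: string, background: string): number {
  const a = relativeLuminance(foreground);
  const b = relativeLuminance(background);
  return (Math.max(a, b) + 0.05) / (Math.min(a, b) + 0.05);
}

// Applies the thresholds from the list above: 4.5:1 for normal text, 3:1 for large text.
function meetsContrast(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

For example, meetsContrast("#767676", "#ffffff") passes for normal text, while the slightly lighter "#777777" on white only passes when largeText is true.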

An accessible site can be used not only by people without disabilities, but also by people with motor or visual impairments. In general, the most important qualities of an accessible site are ease of use and intuitiveness. Unintuitive, hard-to-use pages frustrate users, which reduces traffic to the site. Google's robots are another concern: poorly designed pages can make it difficult for robots to interpret the content, resulting in a low overall score and a low position for the page in Google. So how do you design a site correctly from this point of view? Base it on accessibility standards such as WCAG.

Metatags

These are elements of code placed in the <head /> section of an HTML document. These elements are not visible on the page, but play an important role in communicating with search engines such as Google.

Metatags allow robots to better understand the theme and content of the page, which translates into better search engine rankings.

The main meta tags are

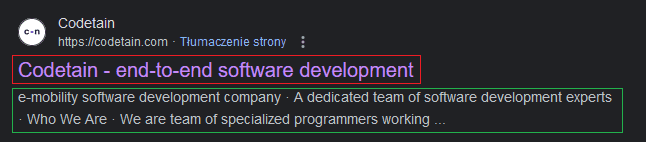

titletag - a unique tag for each post, describing exactly what is on the page.descriptionmetatag - a tag whose purpose is to describe exactly what is on the page in the best possible way.

At first glance, these tags do the same thing. An important difference is that the description metatag does not directly affect the ranking of the page; it is mainly information for the user. The better and more interesting the description, the greater the chance that the user will click on the link and enter the page.

As a side note, there are also metatags prefixed with og: (Open Graph). Facebook reads them whenever a link is shared via Facebook or Messenger. Another group of metatags is prefixed with twitter: and works similarly, except that they are read when the link is shared via Twitter.

The image above shows what the metatags look like in practice. The red colour is the content of the title tag, while the green colour is the description metatag.
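To make the tags discussed in this section concrete, here is a small sketch that builds the markup for the title tag, the description metatag and a few og: and twitter: metatags. The PageMeta shape and the buildHeadTags name are assumptions made for this example, and the sketch produces plain strings rather than using any React package.

```typescript
// Hypothetical page description used only for this illustration.
interface PageMeta {
  title: string;
  description: string;
  url: string;
  image?: string;
}

// Escapes characters that would break HTML text or attribute values.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Returns the tags that would be placed inside <head />.
function buildHeadTags(meta: PageMeta): string[] {
  const title = escapeHtml(meta.title);
  const description = escapeHtml(meta.description);
  const tags = [
    `<title>${title}</title>`,
    `<meta name="description" content="${description}" />`,
    // Open Graph metatags use the "property" attribute.
    `<meta property="og:title" content="${title}" />`,
    `<meta property="og:description" content="${description}" />`,
    `<meta property="og:url" content="${escapeHtml(meta.url)}" />`,
    // Twitter metatags use the "name" attribute.
    `<meta name="twitter:card" content="summary" />`,
    `<meta name="twitter:title" content="${title}" />`,
    `<meta name="twitter:description" content="${description}" />`,
  ];
  if (meta.image) {
    tags.push(`<meta property="og:image" content="${escapeHtml(meta.image)}" />`);
  }
  return tags;
}
```

Keeping the title and description in one object makes it harder for the og: and twitter: variants to drift away from the main tags.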

Using HTTPS

Serving a site over HTTPS with an SSL certificate is not a decisive factor for SEO, but it does have a positive effect. Having an SSL certificate is now standard and it is hard to find a site without one, so this point can be treated as a side note.

Friendly URL

A friendly URL is one that is easy for a human to understand, so that the person seeing it can easily find out where on the page they are. In addition, a friendly URL has another advantage, which is that it makes it easier for Google bots to index the content of the website.

Example of a friendly URL: https://blog.com/example-article

Example of an unfriendly URL: https://blog.com/kld7asd812121gyqw
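A friendly URL segment is usually derived from a human-readable title. The sketch below shows one common way to do that. The slugify function is a hypothetical helper written for this example, and it only handles letters that decompose into a Latin base letter plus an accent; other characters are replaced with hyphens.

```typescript
// Hypothetical helper: turns an article title into a URL-friendly segment.
function slugify(title: string): string {
  return title
    .normalize("NFKD") // split accented letters into base letter + combining mark
    .replace(/[\u0300-\u036f]/g, "") // drop the combining marks
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-") // collapse everything else into single hyphens
    .replace(/^-+|-+$/g, ""); // trim hyphens from both ends
}
```

With this helper, a title such as "Example Article" becomes the example-article segment used in the friendly URL above.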

robots.txt file

The robots.txt file is a text file containing instructions that tell search engine robots which parts of a site they may crawl. This allows a site owner to control the movement of search engine robots on their site, for example by allowing access to certain resources and blocking others that are irrelevant from an SEO perspective.

One thing to keep in mind is that the robots.txt file does not enforce anything. It is a hint to the indexing robots as to which resources should be visited and which can be omitted, and well-behaved robots follow it voluntarily.

Several directives can be used in the robots.txt file, including but not limited to:

- User-agent - defines which search engine robots the rules that follow apply to.

- Disallow - defines which pages should not be visited by the robot.

- Allow - defines which pages may be visited by the robot.

- Sitemap - indicates the sitemap address, which makes it easier for search engine robots to browse the site.
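The directives above combine into groups: a User-agent line followed by its Allow and Disallow rules, with an optional Sitemap line at the end. As a rough sketch, a robots.txt file could be generated as shown below. The RobotsGroup shape, the buildRobotsTxt name and the /admin path in the usage note are assumptions made for this example.

```typescript
// Hypothetical description of one rule group in robots.txt.
interface RobotsGroup {
  userAgent: string;
  allow?: string[];
  disallow?: string[];
}

// Builds the text of a robots.txt file from rule groups and an optional sitemap URL.
function buildRobotsTxt(groups: RobotsGroup[], sitemapUrl?: string): string {
  const lines: string[] = [];
  for (const group of groups) {
    lines.push(`User-agent: ${group.userAgent}`);
    for (const path of group.allow || []) lines.push(`Allow: ${path}`);
    for (const path of group.disallow || []) lines.push(`Disallow: ${path}`);
    lines.push(""); // blank line separates groups
  }
  if (sitemapUrl) lines.push(`Sitemap: ${sitemapUrl}`);
  // Remove trailing blank lines and end the file with a single newline.
  return lines.join("\n").replace(/\s+$/, "") + "\n";
}
```

Calling buildRobotsTxt([{ userAgent: "*", disallow: ["/admin"] }]) would yield a file that asks all robots to stay out of a hypothetical /admin section.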

sitemap.xml file

A sitemap is an XML file that helps search engine robots crawl pages (sitemaps are usually used for large web applications with many sub-pages). What exactly is a sitemap.xml file? It is nothing more than a list of the sub-pages of a site that should be scanned by indexing robots, so that the sub-pages have a better chance of being displayed in the search engine.

The sitemap.xml file has a specific structure and consists of certain tags. I will only mention the most important ones:

- urlset - the tag that opens the sitemap file

- url - the tag that wraps a single subpage entry: its address and other tags, such as the one specifying the last modification of the subpage

- loc - the tag indicating the address of the subpage

- lastmod - the date of the last modification. It lets the robot know whether the content has changed since the last scan, which is especially important on sites with many subpages

- changefreq - a tag that indicates how often the content of the subpage changes
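Since a sitemap is just a list of entries built from these tags, it can be generated from data instead of being written by hand. The sketch below is one possible way to do it. The SitemapEntry shape and the buildSitemap name are assumptions made for this example, and dates are expected to be passed in already formatted (for example 2024-03-27).

```typescript
// Hypothetical description of one <url> entry.
interface SitemapEntry {
  loc: string;
  lastmod?: string; // e.g. "2024-03-27"
  changefreq?: "always" | "hourly" | "daily" | "weekly" | "monthly" | "yearly" | "never";
}

// Escapes characters that are not allowed as-is in XML text.
function escapeXml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&apos;");
}

// Builds the content of sitemap.xml from a list of entries.
function buildSitemap(entries: SitemapEntry[]): string {
  const urls = entries.map((entry) => {
    const parts = [`    <loc>${escapeXml(entry.loc)}</loc>`];
    if (entry.lastmod) parts.push(`    <lastmod>${escapeXml(entry.lastmod)}</lastmod>`);
    if (entry.changefreq) parts.push(`    <changefreq>${entry.changefreq}</changefreq>`);
    return `  <url>\n${parts.join("\n")}\n  </url>`;
  });
  return [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    ...urls,
    "</urlset>",
  ].join("\n") + "\n";
}
```

In an application where the backend already knows every article path and its last modification date, a function like this could be run at build time or served from an endpoint, so the sitemap stays in sync with the content.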

Now a little practice

As an example, we will use a simple blog application written in the React library. The application is divided into a frontend part written in React and a backend part - the backend provides endpoints with data that are displayed on the UI. I add this information to indicate that Server Side Rendering (SSR) is not used in this case.

The application consists of a page with a list of articles, and a sub-page of the article of your choice.

Link to demo: https://blog-frontend-xulx.onrender.com/

Link to github repo: https://github.com/kaczorowskid/blog-frontend

Using semantic HTML

The site was initially written in a deliberately non-semantic way: only the <div /> and <span /> tags were used. This is a mistake, because indexing robots cannot correctly understand the content of such a page. The following tags were added:

- headings <h1 />, <h2 />

- header

- main

- article

- section

- footer

With a few simple changes, the site has become more understandable to indexing robots.

Accessibility

A number of changes have been made to make the application accessible to people with disabilities. The site can be operated using only the keyboard: the Tab key and the arrow keys.

Metatags

The react-helmet package, a popular choice for managing metatags in React, was used.

To check that the metatags have been added correctly, it is worth checking the source of the page in the browser's development tools. They should be visible in the <head></head> section. The indexing robots will thank you :)

Using HTTPS

The hosting on which the site was deployed provides an SSL certificate by default, so I omit this point.

Friendly URL

To implement the friendly URL functionality, an additional path field has been added to the database. A friendly URL should be easily understood by a human and therefore by a crawler, unlike the string of characters that the database generates as an id.

Link to the article before the change: https://blog-frontend-xulx.onrender.com/article/65f70abd95344172a306d489

Link to article after change: https://blog-frontend-xulx.onrender.com/article/the-rise-of-artificial-intelligence-in-healthcare

As you can see, the change is significant. Thanks to it, both a person browsing the page and the indexing robots can tell which resource they are currently viewing.

robots.txt file

Due to the fact that the application is very simple, consisting of a main page and one subpage, it is not necessary to block any view in the robots.txt file. Therefore, the file will look as follows:

    User-agent: *
    Allow: /
    Sitemap: https://blog-frontend-xulx.onrender.com/sitemap.xml

sitemap.xml file

For the same reason as in the point above, the sitemap.xml file is very simple and contains only a few addresses:

    <?xml version="1.0" encoding="utf-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>https://blog-frontend-xulx.onrender.com</loc>
        <lastmod>2024-03-27</lastmod>
      </url>
      <url>
        <loc>https://blog-frontend-xulx.onrender.com/article/introduction-to-hooks-in-react</loc>
        <lastmod>2024-03-17</lastmod>
      </url>
      <url>
        <loc>https://blog-frontend-xulx.onrender.com/article/world-of-cryptocurrencies-an-overview</loc>
        <lastmod>2024-03-13</lastmod>
      </url>
      <url>
        <loc>https://blog-frontend-xulx.onrender.com/article/the-rise-of-artificial-intelligence-in-healthcare</loc>
        <lastmod>2024-03-11</lastmod>
      </url>
      <url>
        <loc>https://blog-frontend-xulx.onrender.com/article/the-future-of-quentum-computing-unlocking-boundless-potential</loc>
        <lastmod>2024-03-11</lastmod>
      </url>
    </urlset>

Summary of results

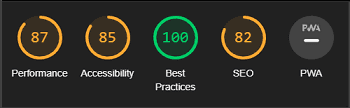

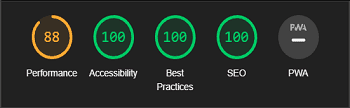

To test on-page SEO, I used Lighthouse, which is built into Chrome, because its SEO evaluation does not take into account factors such as keywords or on-page content.

| BEFORE | AFTER |
|---|---|
| branch before changes | branch after changes |

Summary

React is a strong choice for building modern web applications, offering flexibility and efficiency in managing complex user interfaces. However, a React application rendered only in the browser, like the one in this article, does not get Server Side Rendering (SSR) out of the box, so developers must pay extra attention to SEO.

Even without SSR, React remains a capable tool for building SEO-friendly applications, provided attention is paid to detail. By optimising metatags, prioritising content accessibility and using semantic HTML, developers can improve the discoverability and indexing of React-based projects in search engines.

While frameworks like Next.js provide integrated SSR, React developers can still achieve competitive SEO results with supplementary tools and techniques, as long as they keep up with SEO best practices and keep refining their approach.

In conclusion, a client-side rendered React application poses some SEO challenges, but it still offers plenty of opportunities for creating engaging and discoverable web experiences. Combining React's strengths with the SEO practices described in this article helps drive organic traffic and user engagement.

Photo by rawpixel.com on freepik.com